This past year I’ve made some great strides in relying more on FOSS (Free and Open Source Software). A few reasons for moving toward an all-FOSS software stack are:

- Free – Of course we like free! I wanted to avoid monthly subscriptions for simple services.

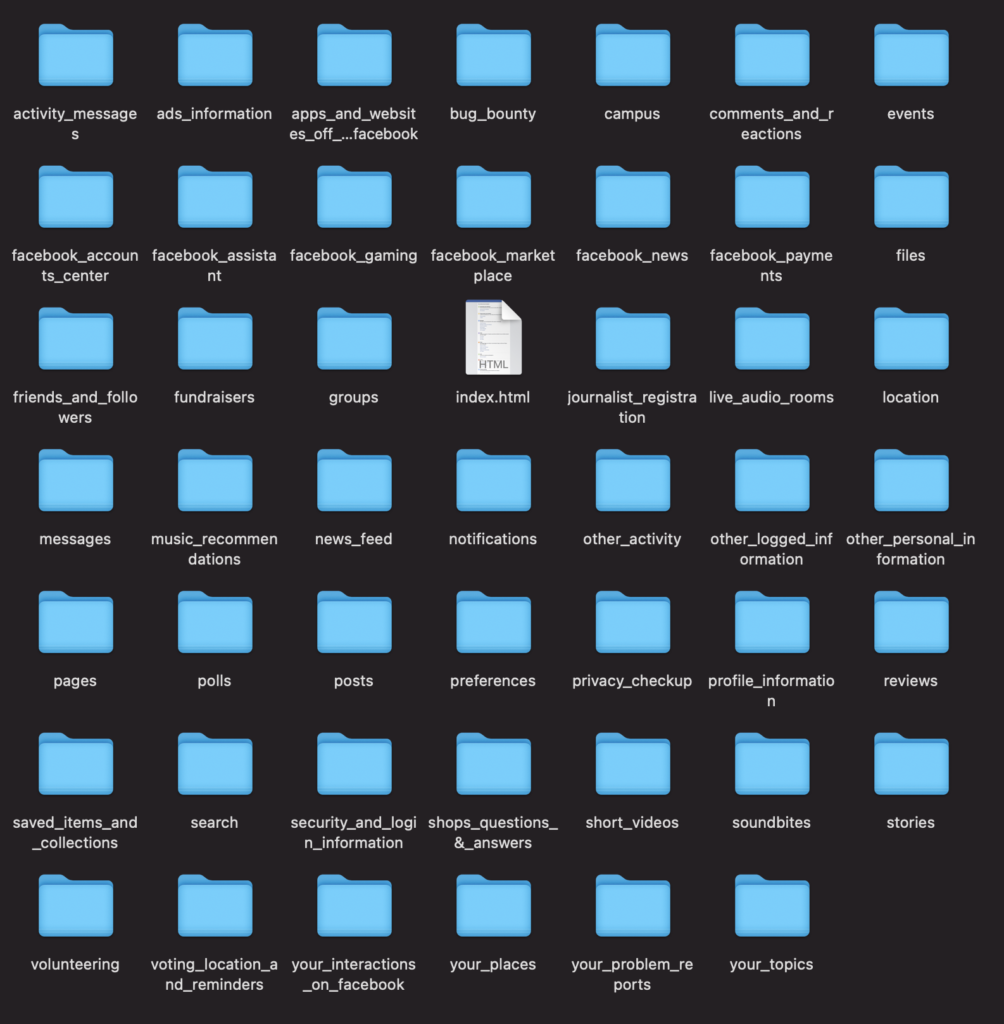

- Privacy – I’d prefer not to have my data on large companies’ servers, which is also why I prefer self-hosting.

- Auditable code – I don’t often audit the code, but on occasion I do!

- Contributable code – If I want a feature, I can contribute it – or easily request it on a git repository.

To aid my future self (and others) in moving more toward FOSS alternatives, I’ve begun compiling a list of FOSS Android apps on GitHub.

For the most up to date list see: https://github.com/meichthys/go_foss_android

For reference, here is the list as of 2024-01-16:

📱 My FOSS Android Apps

After much search and peril I have compiled this list which I believe to be the best set of FOSS Android apps that support my (and hopefully most people’s) full software requirements.

⚠️ This list does not try to list ALL FOSS Android apps (see offa/android-foss), but rather the best app in each category that fits <i>my</i> daily use case.

📋 The List

| App | Type | Shields | Permission<sup>1</sup> | Notes |

|---|---|---|---|---|

Obtainium Obtainium |

App Store |    |

🌐<br />🔔 | Use this to Install the rest |

AndBible AndBible |

Bible |    |

🌐<br />🔔 | |

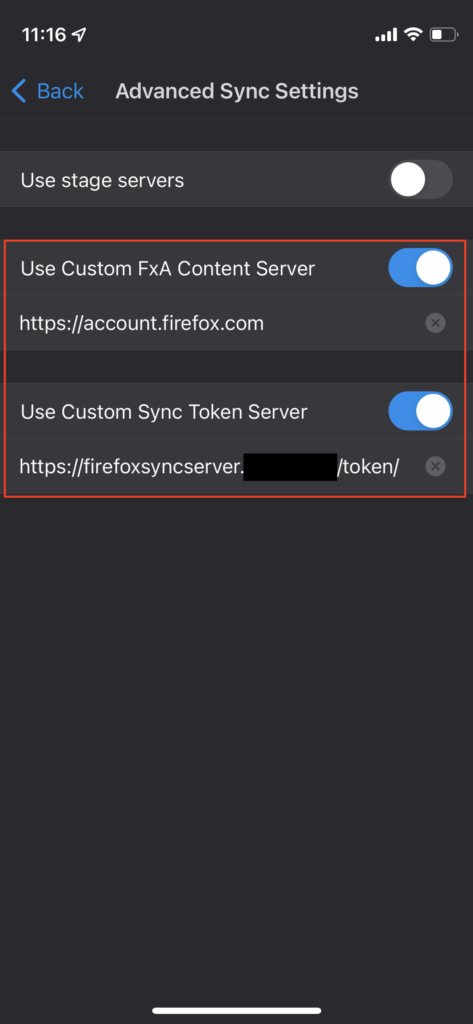

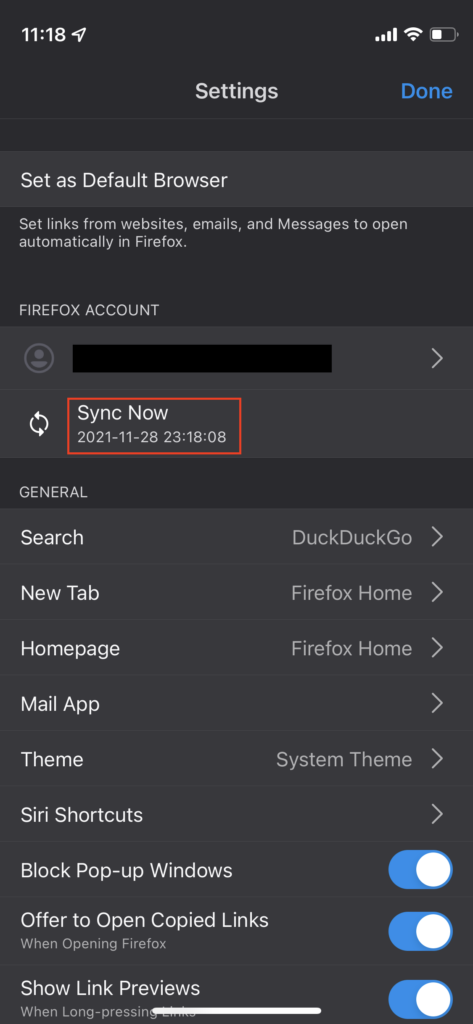

Firefox Firefox |

Browser |    |

📷📍🎤🌐🔔 | Extensions make this browser very powerful – and we need Firefox to survive! |

SimpleCalendar SimpleCalendar |

Calendar |    |

🗓️🔔📇 | Nice compact month view<br />Use with DavX5 |

OpenCamera OpenCamera |

Camera |   |

📷🎤 | Currently No FOSS cameras support live/motion photos |

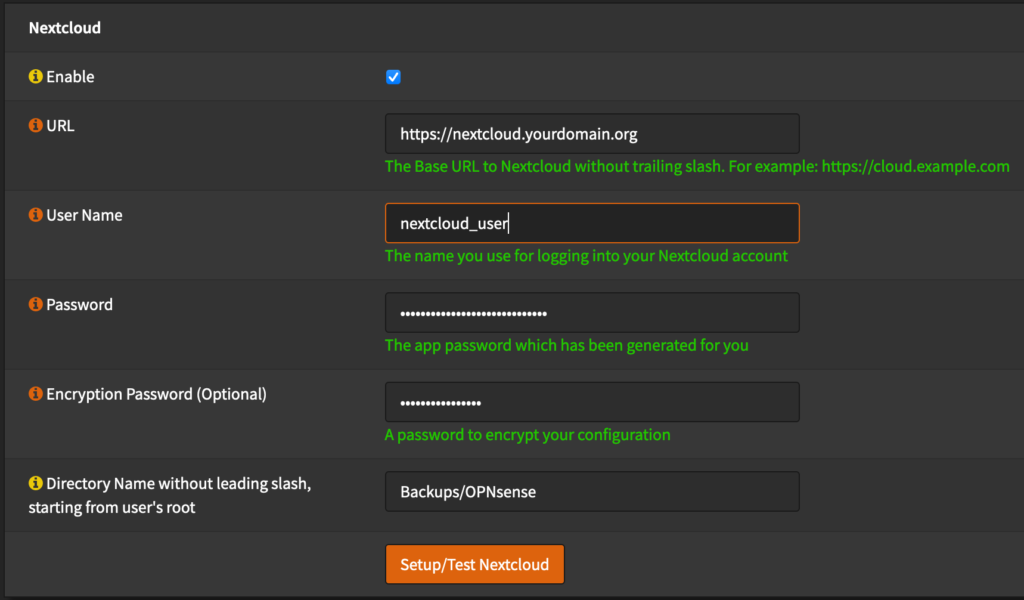

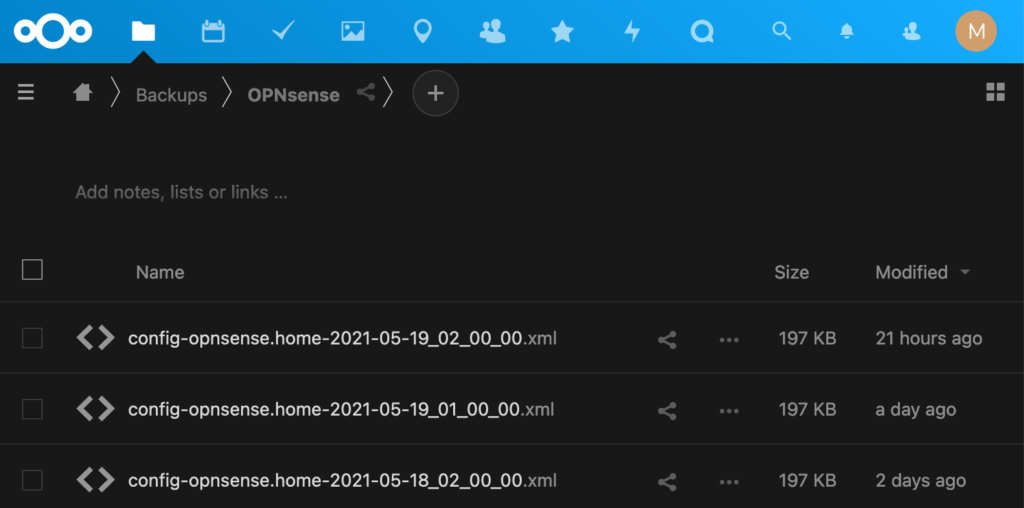

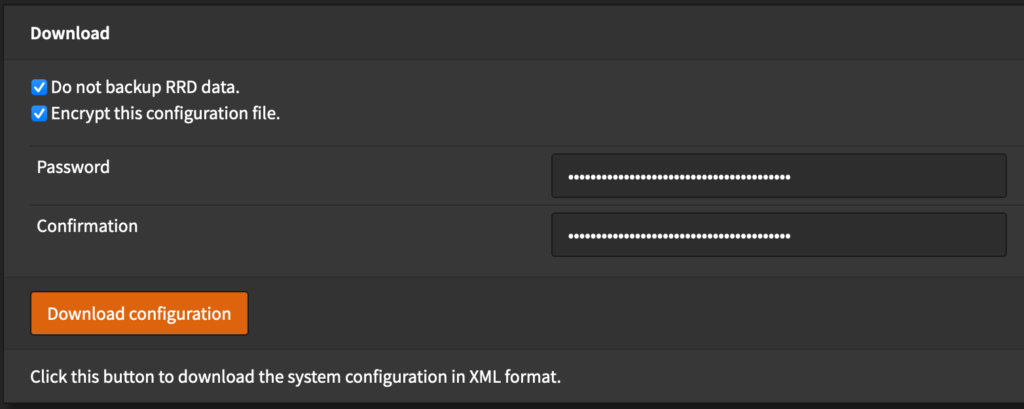

Nextcloud Nextcloud |

Cloud Storage |    |

📷🌐🔔 | Requires DAV Server (Nextcloud) |

FairEmail FairEmail |

|

🗓️📇🌐🔔 | So. Many. Features. | |

DavX<sup>5</sup> DavX<sup>5</sup> |

DAV Sync |    |

🗓️📇🌐🔔 | Requires DAV Server (Nextcloud)<br />Use with Calendar, Contacts, and Tasks |

|

Desktop Integration |    |

🗓️📇📂🌐🔔📞 | |

OSSDocumentScanner OSSDocumentScanner |

Document Scanner |    |

📷🌐 | |

SimpleFileManager SimpleFileManager |

File Manager |    |

📂 | |

Aves Aves |

Gallery |    |

🌐🖼️ | |

OpenBoard OpenBoard |

Keyboard |    |

📇 | |

Kvaesitso Kvaesitso |

Launcher |    |

🗓️📇📍🌐 | Also seethis great comparison of other foss launchers. |

Signal Signal |

Messaging |    |

📷📇📍🎤🌐🔔📞🖼️ | |

NextcloudNotes NextcloudNotes |

Notes |    |

🌐 | Requires Nextcloud Server<br /><br /><br />Non-Server Alternative: OmniNote |

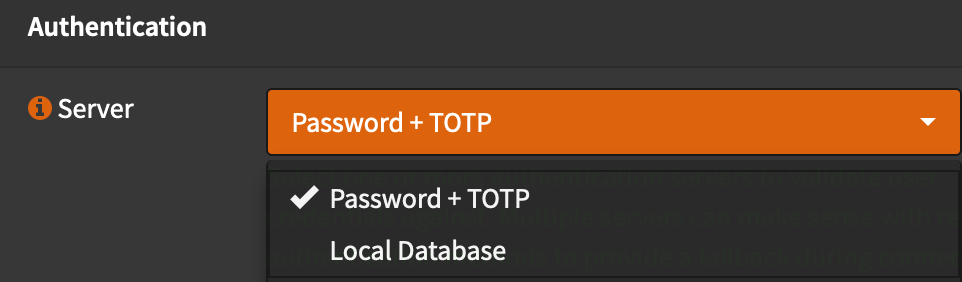

Bitwarden Bitwarden |

Password Manager |    |

📷🌐🔔 | Requires Bitwarden Server Instance (SeeVaultwarden) |

SimpleDialer SimpleDialer |

Phone / Dialer |    |

📷📇🎤🌐🔔📞 | |

AudioBookshelf AudioBookshelf |

Podcast / AudioBooks |    |

🌐 | RequiresAudiobookshelf Server Instance<br /><br /><br />Non-Server Alternative: Podverse |

Nextcloud News Nextcloud News |

RSS Reader |    |

🌐🔔 | Requires Nextcloud instance<br /><br />Non-Server Alernative: ReadYou |

QKSMS QKSMS |

SMS |    |

📇🌐🔔📞 | Project may be abandoned(?) |

Tasks Tasks |

Tasks |    |

🗓️📍🌐🔔 | Use with DavX5 to sync CalDav Tasks |

omWeather omWeather |

Weather |    |

📍🌐 | Extended Forecast & Radar |

<sup>1</sup> 🗓️: Calendar, 📷: Camera, 📇: Contacts, 📂: Files, 📍: Location, 🎤: Microphone, 🌐: Network, 🔔: Notifications, 📞: Phone, 🖼️: Photos/Videos

📢 Acknowledgements

Much of my perilous search was improved by the following resources:

🛠️ Contributing

Contributions are welcome, but please keep in mind that this is an ‘opinionated’ list on which I will make the final determination. Suggestions for different apps are highly encouraged, since I may have missed some apps (or app features) in my perilous search.

🗒️ License: MIT

~ If you had to guess, what percentage of software that you use is FOSS? My rough estimate is about 80%.